At BharatGen, we’re proud to unveil Param-1 (2.9B), an instruction-tuned foundational language model purpose-built for India’s agriculture domain. This model isn’t just a technical achievement; it’s a step toward democratizing AI for Bharat’s most foundational sector: agriculture.

Architecture: A Lean Model with a Purpose

Param-1 is a 2.9B parameter decoder-only transformer model hosted on Hugging Face. Unlike general-purpose models, Param-1 has been fine-tuned from the ground up to understand, process, and generate agriculture-specific content tailored for Indian contexts, in both English and Hindi.

Data Preparation: Grounding the Model in Indian Agriculture

We knew that building a truly useful model meant building the right data pipeline first. Here’s how we approached it:

- 17,000 open-source agricultural news articles and knowledge passages were scraped.

- Each passage was annotated with 5 diverse questions using an open-source LLM.

- We constructed a comprehensive India-centric taxonomy spanning crops, weather, soil, pests, fertilizers, market conditions, and government schemes.

- We created agricultural personas such as smallholder farmers, agri-extension officers, and policymakers.

- From this framework, we generated 2 million QnA pairs, each grounded in taxonomy and persona contexts.

- All content was translated into Hindi, ensuring bilingual robustness.

- Finally, we generated 6 million multi-turn conversations, simulating real agri-dialogues in rural and advisory settings.

The result? A massively domain-aligned dataset that captures not just facts, but also the intent and context of agricultural communication in India.
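To make the taxonomy-and-persona step concrete, here is a minimal sketch of how question-generation prompts could be enumerated from the two. The taxonomy entries, persona list, prompt wording, and the `build_generation_prompts` helper are all hypothetical stand-ins for illustration; the post does not describe the actual prompts or tooling.

```python
from itertools import product

# Hypothetical, heavily abbreviated stand-ins for the real taxonomy and personas.
TAXONOMY = {
    "crops": ["wheat sowing window", "paddy nursery management"],
    "government schemes": ["crop insurance eligibility"],
}
PERSONAS = ["smallholder farmer", "agri-extension officer"]


def build_generation_prompts(taxonomy, personas):
    """Return one question-generation prompt per (topic, persona) pair."""
    topics = [
        (category, topic)
        for category, topic_list in taxonomy.items()
        for topic in topic_list
    ]
    prompts = []
    for (category, topic), persona in product(topics, personas):
        prompts.append(
            {
                "category": category,
                "topic": topic,
                "persona": persona,
                # Assumed wording; the real generation prompt is not published here.
                "prompt": (
                    f"Persona: {persona}. Topic: {topic} ({category}). "
                    "Write one question this persona would ask and answer it "
                    "using only the provided passage."
                ),
            }
        )
    return prompts


prompts = build_generation_prompts(TAXONOMY, PERSONAS)
print(len(prompts))  # 3 topics x 2 personas = 6 prompts
```

Crossing every topic with every persona is what lets a modest taxonomy fan out into a large number of grounded Q&A pairs.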

Training at Scale: From Raw Data to Instruction Finesse

Training Param-1 was a large-scale, precision-engineered process:

- Prompt Template: We designed a custom instruction prompt template optimized for multi-turn, context-rich inference.

- Training Framework: Leveraged Hugging Face with torchrun for multi-node distributed training.

- Training Samples: 12 million samples

- Test Set: 1.2 million examples

- Epochs: 3

- Scheduler: Linear with warmup

- Learning Rate:

  - Base: 5e-6

  - Min: 5e-7

- Batch Configuration:

  - Global Batch Size: 1024

  - Micro Batch Size: 4

  - Gradient Accumulation: 32

- Tokens Added: <user>, <assistant>, <context>, <system_prompt> to enable clean instruction formatting

- Vocabulary Size: 256k + 4
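As an illustration of how the four added tokens could delimit a multi-turn exchange, the sketch below flattens a conversation into a single prompt string. Only the token names come from the list above; the ordering, the newline separators, the `render_conversation` helper, and the sample dialogue are assumptions, not the actual Param-1 template.

```python
# The four added tokens come from the post; the layout below is hypothetical.
SPECIAL_TOKENS = ("<user>", "<assistant>", "<context>", "<system_prompt>")


def render_conversation(system_prompt, context, turns):
    """Flatten a multi-turn exchange into one prompt string.

    `turns` is a list of (role, text) pairs with role "user" or "assistant".
    """
    parts = [f"<system_prompt> {system_prompt}", f"<context> {context}"]
    for role, text in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unknown role: {role!r}")
        parts.append(f"<{role}> {text}")
    # End with the assistant token so the model continues with its reply.
    parts.append("<assistant>")
    return "\n".join(parts)


# Placeholder dialogue, written only to show the shape of the output.
prompt = render_conversation(
    system_prompt="You are an agricultural advisor for Indian farmers.",
    context="Rabi season, black soil, rainfed plot.",
    turns=[
        ("user", "Which crop should I sow after soybean?"),
        ("assistant", "Chickpea is a common choice on residual moisture."),
        ("user", "When should I sow it?"),
    ],
)
print(prompt)
```

Dedicated tokens make role boundaries unambiguous to the tokenizer, which is the reason to extend the vocabulary rather than reuse plain-text markers.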

This setup ensured efficient convergence, high instruction alignment, and strong multi-turn memory, which is essential for the conversational use cases we’re targeting.
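The batch and learning-rate figures above can be sanity-checked with a little arithmetic. The sketch below assumes the common convention that global batch size equals micro batch size times gradient-accumulation steps times data-parallel workers, and that each sample fills one batch slot; the worker count and step totals it derives are implied by those assumptions, not stated in the post. The warmup length is a made-up value, and flooring the linear decay at the minimum rate is one plausible reading of the "Min" setting.

```python
import math

GLOBAL_BATCH, MICRO_BATCH, GRAD_ACCUM = 1024, 4, 32
TRAIN_SAMPLES, EPOCHS = 12_000_000, 3
BASE_LR, MIN_LR = 5e-6, 5e-7
WARMUP_STEPS = 1_000  # hypothetical; the post does not give a warmup length

# Assumes global batch = micro batch x accumulation steps x data-parallel ranks.
data_parallel_ranks = GLOBAL_BATCH // (MICRO_BATCH * GRAD_ACCUM)

# Assumes one sample per batch slot (no packing) and a final partial batch.
steps_per_epoch = math.ceil(TRAIN_SAMPLES / GLOBAL_BATCH)
total_steps = steps_per_epoch * EPOCHS


def lr_at(step, total=total_steps, warmup=WARMUP_STEPS):
    """Linear warmup to BASE_LR, then linear decay down to MIN_LR."""
    if step < warmup:
        return BASE_LR * (step + 1) / warmup
    progress = (step - warmup) / max(1, total - warmup)
    return max(MIN_LR, BASE_LR - (BASE_LR - MIN_LR) * progress)


print(data_parallel_ranks)  # 8
print(steps_per_epoch, total_steps)  # 11719 35157
```

Under these assumptions the run works out to eight data-parallel workers and roughly 35,000 optimizer steps across the three epochs.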

Evaluation Strategy: Beyond BLEU and Accuracy

Standard LLM benchmarks often miss the mark for specialized domains. That’s why we’re evaluating Param-1 on two complementary tracks:

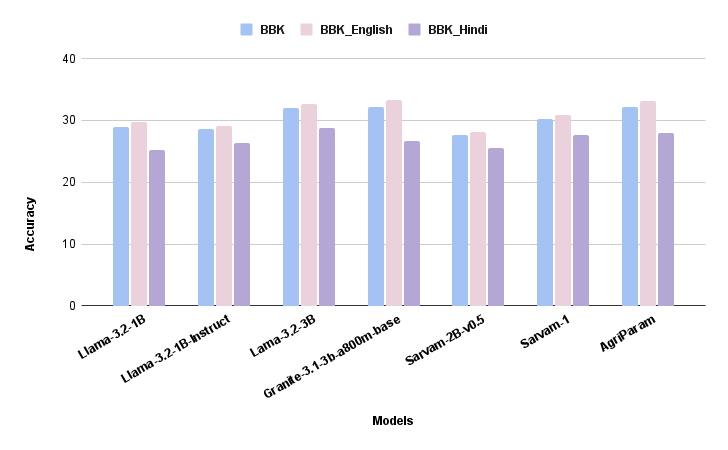

BhashaBench-Krishi (BBK): The Agricultural AI Gold Standard

We evaluate AgriParam-1 on BhashaBench-Krishi, India’s most comprehensive agricultural knowledge benchmark. Unlike generic benchmarks testing factual recall, BBK measures an AI’s ability to provide actionable, localized agricultural advice that Indian farmers actually need.

BBK’s Unique Value:

- Authentic Content: 15,405 questions from 55+ real government agricultural exams, ranging from NABARD, ICAR, and MP RAEO to PhD entrance exams

- Domain Depth: 25+ agricultural domains, 270+ topics covering soil science to government schemes

- Bilingual Testing: English (12,648) + Hindi (2,757) questions matching AgriParam-1’s capabilities

- Real-World Complexity: Easy/Medium/Hard questions plus diverse formats (MCQ, Assertion-Reasoning, Match-the-Column)

AgriParam-1’s BBK Advantages:

- Domain Specialization: Superior performance on Agricultural Biotechnology, Plant Sciences, and Agronomy vs. generic models

- Bilingual Consistency: Minimal English-Hindi performance gap, unlike general LLMs, which show 10-15% drops

- Contextual Understanding: Excels at medium-complexity questions mirroring real farmer queries

- Regional Intelligence: Strong on Indian crop varieties, soil types, agro-climatic zones, and government schemes
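For readers who want to measure the English-Hindi gap themselves, a minimal sketch of per-language accuracy on multiple-choice items follows. The record fields (`lang`, `answer`, `predicted`) and the `accuracy_by_language` helper are hypothetical, and the toy records are invented purely to exercise the code; they are not BBK questions or Param-1 results.

```python
from collections import defaultdict


def accuracy_by_language(records):
    """Return {language: fraction of questions answered correctly}."""
    correct, total = defaultdict(int), defaultdict(int)
    for record in records:
        total[record["lang"]] += 1
        correct[record["lang"]] += int(record["predicted"] == record["answer"])
    return {lang: correct[lang] / total[lang] for lang in total}


# Toy records for illustration only; these are not BBK questions or results.
toy_records = [
    {"lang": "en", "answer": "A", "predicted": "A"},
    {"lang": "en", "answer": "C", "predicted": "C"},
    {"lang": "en", "answer": "B", "predicted": "D"},
    {"lang": "en", "answer": "D", "predicted": "D"},
    {"lang": "hi", "answer": "B", "predicted": "B"},
    {"lang": "hi", "answer": "A", "predicted": "C"},
]

scores = accuracy_by_language(toy_records)
gap = scores["en"] - scores["hi"]
print(scores, gap)
```

Reporting the gap alongside the raw scores keeps the bilingual-consistency claim checkable on any model, not just this one.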

What BBK Tests That Others Miss:

- Policy Navigation: Government schemes and subsidy eligibility

- Regional Adaptation: State-specific practices and crop calendars

- Seasonal Intelligence: Kharif/Rabi/Zaid-appropriate advice

- Practical Application: Scientific knowledge → actionable farming decisions

Why This Matters

Agriculture employs close to half of India’s workforce, yet most LLMs today are optimized for global English-speaking audiences and generic use cases. Param-1 represents a shift in perspective: from global-first to Bharat-first, from general-purpose to sector-specialized.

Whether it’s powering Kisan helplines, chatbots for Krishi Vigyan Kendras, or voice assistants for rural India, Param-1 is our first step toward domain-native LLMs that serve real people with real needs.

📥 Want early access, or want to partner with us or contribute?

Follow our updates on Hugging Face

Got an issue or feedback? Our team is here to help.

Reach us at: